What are the top software testing methodologies?

Whether you want to discover new software testing methodologies or rejuvenate test cases, QA is all about efficiency. Evaluate these testing techniques and strategies to meet QA goals.

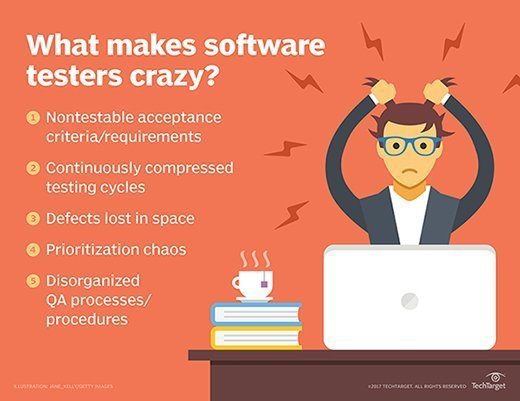

The software testing methodologies that QA teams use change constantly in response to business requirements and constraints. Agile processes, for instance, respond to customer demand better than the Waterfall model does, and they meet the need for speed at every level.

QA testing is not exempt from change, nor should it be. Software testing methodologies must modernize in several key ways to keep up with the pace of the development process. Rapid software testing is a big focus for many mature DevOps shops.

The popular testing types detailed below are either new takes on a traditional test strategy or an improved process that eats up less time than before. And due to the interconnectedness of applications and data, there's one testing technique you can simply never skip.

Feature equals function

Feature testing, also called story testing, has replaced -- and reimagined -- functional testing. But how do feature testing and functional testing differ?

Functional testing verifies that applications function as designed, despite additions or other changes. Feature testing incorporates the idea of Agile user stories and changes the focus to be less on function and more on proving the feature works when integrated with the full application. Because features can be broken into multiple tasks in the kanban or Scrum development model, a full feature is tested in small pieces over a shorter period of time against the full application code. Testers concentrate on the feature over the course of a release cycle to ensure the final product performs as expected.
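As a rough illustration of testing a feature in small pieces against the full application, the sketch below splits one user story into two tasks and checks each one. The `Cart` class, the `SAVE10` promo code and the check function names are hypothetical, invented only for this example; a real team would run such checks against its actual application code.

```python
class Cart:
    """Hypothetical application under test, reduced to a few lines."""

    def __init__(self):
        self.items = []          # list of (name, price_cents) tuples
        self.discount_pct = 0

    def add(self, name, price_cents):
        self.items.append((name, price_cents))

    def apply_code(self, code):
        # Task 1 of the story: recognize a promo code.
        if code == "SAVE10":
            self.discount_pct = 10
            return True
        return False

    def total_cents(self):
        # Task 2 of the story: reflect the discount in the checkout total.
        subtotal = sum(price for _, price in self.items)
        return subtotal * (100 - self.discount_pct) // 100


def check_task_1_code_is_recognized():
    cart = Cart()
    assert cart.apply_code("SAVE10")
    assert not cart.apply_code("BOGUS")


def check_task_2_total_reflects_discount():
    # Exercises the task through the full add -> apply -> total flow,
    # not the discount arithmetic in isolation.
    cart = Cart()
    cart.add("book", 2000)
    cart.add("pen", 500)
    cart.apply_code("SAVE10")
    assert cart.total_cents() == 2250


if __name__ == "__main__":
    check_task_1_code_is_recognized()
    check_task_2_total_reflects_discount()
    print("story checks passed")
```

Each check maps to one task on the board, so a tester can verify the pieces as they land rather than waiting for the whole story to finish.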

Regression switches to smoke and release

Regression testing has changed dramatically in recent years. Regression tests no longer take weeks to run through every conceivable scenario. Instead, this step is now a smoke test that ensures the basics of the software function as expected.

Modern regression testing includes automated unit tests at the code level. QA engineers can also automate a set of back-end unit tests to check anything from API connectivity to data exchange success. Even so, QA engineers should combine test automation with manual testing techniques for regression testing.
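A minimal sketch of such automated back-end checks, using Python's standard `unittest` module, might look like the following. `FakeOrderApi` is a hypothetical stand-in for a real API client, and its `ping` and `submit` methods are assumptions made for illustration; in practice, the tests would call the team's own client against a test environment.

```python
import json
import unittest


class FakeOrderApi:
    """Hypothetical stand-in for a back-end API client (not a real library)."""

    def ping(self) -> bool:
        # A real client would issue a lightweight request here.
        return True

    def submit(self, payload: str) -> str:
        # Echo the order back with a status, as a real service might.
        order = json.loads(payload)
        return json.dumps({"id": 1, "sku": order["sku"], "status": "accepted"})


class BackEndRegressionTests(unittest.TestCase):
    def setUp(self):
        self.api = FakeOrderApi()

    def test_api_connectivity(self):
        self.assertTrue(self.api.ping())

    def test_data_exchange_round_trip(self):
        # Send JSON in, parse JSON out, and confirm the data survived.
        response = json.loads(self.api.submit(json.dumps({"sku": "A-100"})))
        self.assertEqual(response["sku"], "A-100")
        self.assertEqual(response["status"], "accepted")


def run_regression_suite() -> unittest.TestResult:
    """Load and run the suite, returning the result for a pipeline to inspect."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(BackEndRegressionTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_regression_suite()
```

Because the suite runs in seconds, it can execute on every build, which leaves manual effort for the checks that automation handles poorly.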

Manual smoke tests evaluate whether the software's most critical capabilities work -- the name comes from checking whether a product catches fire when it is turned on. Regression testing in this manner takes a matter of days, and most often runs as a continuous testing process.

Modern regression testing also includes release testing, wherein the QA tester confirms all the items that make up a release are present in the code. Release testing resembles a second round of traditional regression testing: It ensures released features exist in the code and function together as expected.
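The presence check at the heart of release testing can be sketched as a comparison between what the release is supposed to contain and what the build reports. The function name, the item identifiers and the idea of a build exposing its shipped items as a set are all assumptions for this example; teams track release contents in many different ways.

```python
def missing_release_items(manifest: set[str], shipped: set[str]) -> list[str]:
    """Return planned release items that the build does not contain, sorted."""
    return sorted(manifest - shipped)


if __name__ == "__main__":
    # Hypothetical release plan and build contents.
    planned = {"promo-codes", "guest-checkout", "order-history"}
    in_build = {"promo-codes", "order-history", "dark-mode"}

    gaps = missing_release_items(planned, in_build)
    if gaps:
        print("Release is incomplete, missing:", ", ".join(gaps))
    else:
        print("All planned items are present")
```

A check like this only confirms that items exist in the build. Confirming they function together still requires running the features, as the paragraph above describes.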

Integration is everything

Integration testing has become a widely prioritized software testing methodology. Testers can no longer skip or ignore the process, because nearly every application shares data with multiple partners across secured API structures. In modern development and test practices, integration testing is continuous. It's built automatically into feature testing and occurs as well during smoke and release testing.

To many modern software teams, the process might not even go by the name integration testing anymore. Productivity workers and consumers rely on multiple apps working seamlessly at the same time, so every little thing is integrated.

Modern software testing methodologies are good news for QA teams, because their adoption provides an opportunity to grow and expand skill sets, while moving at a much faster pace with leaner practices. QA teams should use continuous testing, automated unit tests and other strategies with these updated test types to keep up with the business realities of application development.