Quality assurance (QA)

Quality assurance (QA) is any systematic process of determining whether a product or service meets specified requirements.

QA establishes and maintains set requirements for developing or manufacturing reliable products. A quality assurance system is meant to increase customer confidence and a company's credibility while improving work processes and efficiency. It also enables a company to better compete with others.

The ISO (International Organization for Standardization) is a driving force behind QA practices and mapping the processes used to implement QA. QA is often paired with the ISO 9000 international standard. Many companies use ISO 9000 to ensure that their quality assurance system is in place and effective.

The concept of QA as a formalized practice started in the manufacturing industry, and it has since spread to most industries, including software development.

Importance of quality assurance

Quality assurance helps a company create products and services that meet the needs, expectations and requirements of customers. It yields high-quality product offerings that build trust and loyalty with customers. The standards and procedures defined by a quality assurance program help prevent product defects before they arise.

Quality assurance methods

Quality assurance utilizes one of three methods:

- Failure testing, which continually tests a product to determine if it breaks or fails. For physical products that need to withstand stress, this could involve testing the product under heat, pressure or vibration. For software products, failure testing might involve placing the software under high usage or load conditions.

- Statistical process control (SPC), a methodology based on objective data and analysis and developed by Walter Shewhart at Western Electric Company and Bell Telephone Laboratories in the 1920s and 1930s. This methodology uses statistical methods to manage and control the production of products.

- Total quality management (TQM), which applies quantitative methods as the basis for continuous improvement. TQM relies on facts, data and analysis to support product planning and performance reviews.
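To make the SPC idea concrete, the following is a minimal sketch of one common kind of control chart, an individuals chart, and not necessarily the form any particular QA program uses. It estimates a center line and three-sigma control limits from baseline measurements, then flags new measurements that fall outside those limits. The function names and the sample diameters are hypothetical and chosen only for illustration; the constant 1.128 is the standard d2 value for moving ranges of two consecutive points.

```python
from statistics import mean


def individuals_control_limits(baseline):
    """Return (lower limit, center line, upper limit) for an individuals chart.

    Sigma is estimated as the average moving range divided by d2 = 1.128,
    the standard constant for ranges of two consecutive points.
    """
    if len(baseline) < 2:
        raise ValueError("need at least two baseline measurements")
    moving_ranges = [abs(b - a) for a, b in zip(baseline, baseline[1:])]
    center = mean(baseline)
    sigma_hat = mean(moving_ranges) / 1.128
    return center - 3 * sigma_hat, center, center + 3 * sigma_hat


def out_of_control(points, lcl, ucl):
    """Return (index, value) for each point outside the control limits."""
    return [(i, x) for i, x in enumerate(points) if x < lcl or x > ucl]


# Illustrative data only: part diameters in millimetres.
baseline = [10.02, 9.98, 10.01, 9.99, 10.00, 10.03, 9.97, 10.01]
lcl, center, ucl = individuals_control_limits(baseline)

new_points = [10.00, 10.02, 10.15, 9.99]
flagged = out_of_control(new_points, lcl, ucl)

print(f"LCL={lcl:.3f} CL={center:.3f} UCL={ucl:.3f}")
print("Out-of-control points:", flagged)
```

In this sample data, only the 10.15 mm measurement falls outside the limits. A point outside the limits does not prove a defect; it signals that the process may have shifted and is worth investigating.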

QA vs. QC

Some people may confuse the term quality assurance with quality control (QC). Although the two concepts share similarities, there are important distinctions between them.

In effect, QA provides the overall guidelines that apply across an organization, whereas QC is a production-focused process that covers activities such as inspections. QA is any systematic process for making sure a product meets specified requirements; QC addresses narrower issues, such as individual inspections or defects.

In terms of software development, QA practices seek to prevent malfunctioning code or products, while QC implements testing and troubleshooting and fixes code.

History of ISO and QA

Although simple concepts of quality assurance can be traced back to the Middle Ages, QA practices became more important in the United States during World War II, when high volumes of munitions had to be inspected.

The ISO opened in Geneva in 1947 and published its first standard in 1951 on reference temperatures for industrial measurements. The ISO gradually grew and expanded its scope of standards.

The ISO 9000 family of standards was first published in 1987; each standard in the family addresses a different scenario.

QA standards

QA standards have changed and been updated over time, and ISO standards need to change in order to stay relevant to today's businesses.

The latest in the ISO 9000 series is ISO 9001:2015. The guidance in ISO 9001:2015 includes a stronger customer focus, top management practices and how they can change a company, and keeping pace with continuing improvements. Along with general improvements to ISO 9001, ISO 9001:2015 includes improvements to its structure and more information for risk-based decision-making.

Quality assurance in software

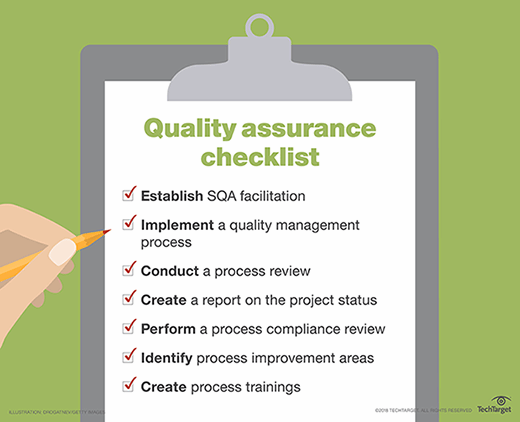

Software quality assurance (SQA) systematically finds patterns and the actions needed to improve development cycles. Finding and fixing coding errors can carry unintended consequences; it is possible to fix one thing, yet break other features and functionality at the same time.

SQA has become important for developers as a means of avoiding errors before they occur, saving development time and expenses. Even with SQA processes in place, an update to software can break other features and cause defects -- commonly known as bugs.
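One common way to catch a fix that breaks something else is a regression test suite that pins down existing behavior. The sketch below uses Python's standard unittest module. The functions format_price and receipt_line are hypothetical stand-ins for a shared helper and a feature that depends on it; they are not taken from any real product.

```python
import unittest


def format_price(amount_cents: int) -> str:
    """Hypothetical shared helper: convert cents to a display string."""
    dollars, cents = divmod(amount_cents, 100)
    return f"${dollars}.{cents:02d}"


def receipt_line(item: str, amount_cents: int) -> str:
    """Hypothetical feature that depends on format_price."""
    return f"{item}: {format_price(amount_cents)}"


class PriceRegressionTests(unittest.TestCase):
    """Pin current behavior so a later change cannot silently alter it."""

    def test_format_price_pads_cents(self):
        self.assertEqual(format_price(1205), "$12.05")

    def test_receipt_line_keeps_expected_format(self):
        # Fails if someone changes format_price and forgets this caller.
        self.assertEqual(receipt_line("Widget", 999), "Widget: $9.99")
```

Saved in a file, these tests can be run with python -m unittest before every release. If a change to format_price alters the receipt output, the second test fails and flags the side effect before it reaches customers.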

There have been numerous SQA strategies. For example, Capability Maturity Model Integration (CMMI) is a performance improvement-focused SQA model. CMMI works by ranking maturity levels of areas within an organization, and it identifies optimizations that can be used for improvement. Rank levels range from being disorganized to being fully optimal.

Several software development methodologies that rely on SQA have emerged over time, such as Waterfall, Agile and Scrum. Each development process seeks to optimize work efficiency.

Waterfall is the traditional linear approach to software development. It's a step-by-step process that typically involves gathering requirements, formalizing a design, implementing code, testing and remediating code, and release. It is often seen as too slow, which is why alternative development methods were created.

Agile is a team-oriented software development methodology where each step in the work process is approached as a sprint. Agile software development is highly adaptive, but it is less predictive because the scope of the project can easily change.

Scrum is an Agile framework in which developers are split into teams to handle specific tasks, and the work is divided into multiple sprints.

To implement a QA system, first set goals for the standard. Consider the advantages and tradeoffs of each approach, such as maximizing efficacy, reducing cost or minimizing errors. Management must be willing to implement process changes and to work together to support the QA system and establish standards for quality.

QA team

Careers in SQA include SQA engineer, SQA analyst and SQA test automation engineer. SQA engineers monitor and test software throughout development. SQA analysts monitor the implications and practices of SQA over software development cycles. SQA test automation requires the individual to create programs that automate the SQA process.

These programs compare predicted outcomes with actual outcomes. This work is used for constant testing.
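As a rough illustration of comparing predicted outcomes with actual outcomes, here is a minimal data-driven test runner. The Case class and run_cases function are hypothetical names invented for this sketch. The cases use Python built-ins so that the example is self-contained, and the last case is deliberately wrong to show how a mismatch is reported.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Case:
    name: str
    func: Callable[..., Any]
    args: tuple
    expected: Any  # the predicted outcome


def run_cases(cases):
    """Return (name, expected, actual) for every case whose outcome differs."""
    failures = []
    for case in cases:
        try:
            actual = case.func(*case.args)
        except Exception as exc:  # an exception counts as a failed outcome
            actual = exc
        if actual != case.expected:
            failures.append((case.name, case.expected, actual))
    return failures


cases = [
    Case("sum of list", sum, ([1, 2, 3],), 6),
    Case("upper-case", str.upper, ("qa",), "QA"),
    Case("sorted order", sorted, ([3, 1, 2],), [1, 2, 3]),
    Case("deliberate mismatch", len, ("abc",), 4),  # wrong on purpose
]

failures = run_cases(cases)
for name, expected, actual in failures:
    print(f"FAIL {name}: expected {expected!r}, got {actual!r}")
```

Because the cases are plain data, new checks can be added without touching the runner, which suits the constant testing described above.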

SQA tools

Software testing is an integral part of software quality assurance. Testing saves time, effort and cost, and it enables a quality end product to be optimally produced. There are numerous software tools and platforms that developers can employ to automate and orchestrate testing in order to facilitate SQA goals.

Selenium is an open source framework for automating tests of web applications. Tests can be written in a variety of popular programming languages, such as C#, Java and Python.

Another open source program, called Jenkins, enables developers and QA staff to run and test code in real time. It's well-suited for a fast-paced environment because it automates tasks related to the building and testing of software.

For web apps or application programming interfaces (APIs), Postman will automate and run tests. It is available for Mac, Windows and Linux, and it supports the Swagger and RAML formats.

QA uses by industry

The following are a few examples of quality assurance in use by industries:

- Manufacturing, the industry that formalized the quality assurance discipline. Manufacturers need to ensure that assembled products are created without defects and meet the defined product specifications and requirements.

- Food production, which uses X-ray systems, among other techniques, to detect physical contaminants in the food production process. The X-ray systems ensure that contaminants are removed and eliminated before products leave the factory.

- Pharmaceutical, which employs different quality assurance approaches during each stage of a drug's development. Across the different stages, the QA processes include reviewing documents, approving equipment calibration, reviewing training records, reviewing manufacturing records and investigating market returns.

QA vs. testing

QA is different from testing. QA is focused on processes and procedures, while testing is focused on the logistics of using a product in order to find defects. QA defines the standards around testing to ensure that a product meets defined business requirements. Testing involves the more tactical process of validating the function of a product and identifying issues.

Pros and cons of QA

The quality of products and services is a key competitive differentiator. Quality assurance helps ensure that organizations create and ship products that are clear of defects and meet the needs and expectations of customers. High-quality products result in satisfied customers, which can result in customer loyalty, repeat purchases, upsell and advocacy.

Quality assurance can lead to cost reductions stemming from the prevention of product defects. If a product is shipped to customers and a defect is discovered, an organization incurs cost in customer support, such as receiving the defect report and troubleshooting. It also incurs the cost of addressing the defect, such as service or engineering hours to correct it, testing to validate the fix and the cost to ship the updated product to the market.

QA does require a substantial investment in people and process. People must define a process workflow and oversee its implementation by members of a QA team. This can be a time-consuming process that impacts the delivery date of products. With few exceptions, the disadvantage of QA is more a requirement -- a necessary step that must be undertaken to ship a quality product. Without QA, more serious disadvantages arise, such as product bugs and the market’s dissatisfaction or rejection of the product.