10 application performance metrics and how to measure them

You've deployed your application. Now what? To keep your application performing well, you need to track various metrics. Take a look at these ten critical KPIs.

Application performance metrics are important for deciphering the extent to which an application actually helps the business it supports and revealing where improvements are needed. The key to success is to track the right metrics for your app.

These application performance metrics, commonly known as key performance indicators (KPIs), are quantitative measures of how effectively the organization achieves its business objectives. Capturing the right metrics will give you a comprehensive report and powerful insights into ways to improve your application.

Below are 10 core application performance metrics that developers should track.

1. CPU usage

CPU usage affects the responsiveness of an application. Spikes in CPU usage can point to several problems. Most often, they suggest that the application is spending its time on computation, which degrades responsiveness. Treat high spikes as a performance bug, because they mean the CPU has reached its usage threshold.
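As a rough illustration of the idea above, the sketch below compares the CPU time a piece of work consumes with the wall-clock time it takes. The helper names (`cpu_utilization`, `busy_loop`, `idle_wait`) are hypothetical, and the approach only covers the current Python process; system-wide CPU monitoring would normally come from an APM agent or OS tooling.

```python
import time


def cpu_utilization(work):
    """Run work() and return (cpu_seconds, wall_seconds, cpu_fraction).

    cpu_fraction close to 1.0 means the process was computing the whole
    time; close to 0.0 means it was mostly waiting.
    """
    wall_start = time.perf_counter()
    cpu_start = time.process_time()
    work()
    cpu_used = time.process_time() - cpu_start
    wall_used = time.perf_counter() - wall_start
    fraction = cpu_used / wall_used if wall_used > 0 else 0.0
    return cpu_used, wall_used, fraction


def busy_loop():
    # CPU-bound work: the process computes for the whole duration.
    total = 0
    for i in range(2_000_000):
        total += i * i
    return total


def idle_wait():
    # Waiting work: sleeping consumes almost no CPU time.
    time.sleep(0.2)


for name, work in (("busy_loop", busy_loop), ("idle_wait", idle_wait)):
    cpu, wall, fraction = cpu_utilization(work)
    print(f"{name}: cpu={cpu:.3f}s wall={wall:.3f}s utilization={fraction:.0%}")
```

A CPU-bound function should report a utilization near 100%, while a function that mostly waits should report a value near zero. Exact figures depend on the machine and its load.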

2. Memory usage

Memory usage is also an important application performance metric. High memory usage indicates high resource consumption on the server. When tracking an application's memory usage, keep an eye on the number of page faults and disk access times. If the memory allocated to the application is inadequate, the application spends more time thrashing (swapping pages between memory and disk) than doing anything else.
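One way to get a first look at memory consumption from inside a Python process is the standard library's `tracemalloc` module. The sketch below is a minimal example under that assumption; `peak_memory_bytes` and `build_large_list` are hypothetical names. It only tracks allocations made by the Python interpreter, so page faults and disk access times still have to come from operating system tools.

```python
import tracemalloc


def peak_memory_bytes(work):
    """Run work() and return the peak number of bytes allocated by Python."""
    tracemalloc.start()
    try:
        work()
        _current, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


def build_large_list():
    # Allocates roughly 1 MB: one thousand 1 KB byte strings.
    return [bytes(1024) for _ in range(1000)]


peak = peak_memory_bytes(build_large_list)
print(f"peak allocation: {peak / 1024:.0f} KiB")
```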

3. Requests per minute and bytes per request

Tracking the number of requests your application's API receives per minute can help determine how the server performs under different loads. It's equally important to track the amount of data the application handles during every request. You might find that the application receives more requests than it can manage or that the amount of data it is forced to handle is hurting performance.
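To make the two measurements concrete, here is a small sketch that groups request records into one-minute buckets and reports the request count and average payload size for each. The record format, a `(unix_timestamp_seconds, payload_bytes)` tuple, and the function name `summarize_requests` are assumptions made for illustration; real data would typically come from access logs or an APM tool.

```python
from collections import defaultdict


def summarize_requests(records):
    """Group (timestamp_seconds, payload_bytes) records by minute.

    Returns {minute_start: {"requests": n, "bytes_per_request": avg}}.
    """
    per_minute = defaultdict(lambda: {"requests": 0, "bytes": 0})
    for timestamp, size in records:
        bucket = int(timestamp // 60) * 60  # start of the minute
        per_minute[bucket]["requests"] += 1
        per_minute[bucket]["bytes"] += size
    return {
        minute: {
            "requests": stats["requests"],
            "bytes_per_request": stats["bytes"] / stats["requests"],
        }
        for minute, stats in sorted(per_minute.items())
    }


# Hypothetical sample: three requests in the first minute, then one each.
sample = [(0, 500), (10, 1500), (59, 1000), (60, 200), (125, 800)]
for minute, stats in summarize_requests(sample).items():
    print(minute, stats)
```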

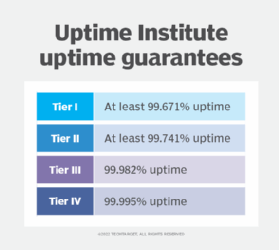

4. Latency and uptime

Latency -- usually measured in milliseconds -- refers to the delay between a user's action on an application and the application's response to that action. Higher latency directly increases the load time of an application. Uptime is the share of time the application is available. To test it, use a ping service, which can be configured to run at specific intervals to determine whether the application is up and running.
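The following sketch shows both ideas in miniature: timing a single action in milliseconds, and turning a series of periodic availability checks into an uptime percentage. The function names are hypothetical, and the checks are represented as plain booleans rather than real network pings.

```python
import time


def measure_latency_ms(action):
    """Return how long action() takes, in milliseconds."""
    start = time.perf_counter()
    action()
    return (time.perf_counter() - start) * 1000.0


def uptime_percent(check_results):
    """Return the percentage of checks that found the application up."""
    results = list(check_results)
    if not results:
        raise ValueError("at least one check result is required")
    return 100.0 * sum(1 for ok in results if ok) / len(results)


# Simulate an action that takes about 50 ms.
print(f"latency: {measure_latency_ms(lambda: time.sleep(0.05)):.1f} ms")

# Ten hypothetical ping checks, one of which failed.
print(f"uptime: {uptime_percent([True] * 9 + [False])}%")
```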

5. Security exposure

You should ensure that both your application and data are safe. Determine how much of the application is covered by security techniques and how much is exposed and unsecured. You should also have a plan in place to determine how much time it takes -- or might take -- to resolve certain security vulnerabilities.

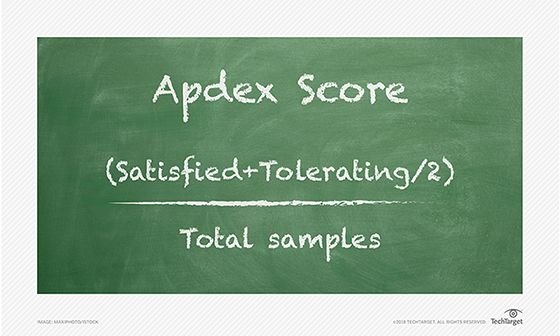

6. User satisfaction/Apdex scores

Application Performance Index (Apdex) is an open standard that measures web applications' response times by comparing them against a predefined threshold. It's calculated as the ratio of satisfactory response times, plus half of the tolerable ones, to the total number of samples. The response time is the time taken by an asset to be returned to the requestor after being requested.

Here's an example: Assume that you've defined a time threshold of T. All responses completed in T or less are considered to have satisfied the user. Responses that take longer than T are tolerated up to a second, higher threshold (4T in the Apdex specification). Beyond that, they are considered to have frustrated the user.

Apdex defines three types of users based on user satisfaction:

- Satisfied. This rating represents users who experienced satisfactory or high responsiveness.

- Tolerating. This rating represents users who have experienced slow but acceptable responsiveness.

- Frustrated. This rating represents users who have experienced unacceptable responsiveness.

You can calculate the Apdex score with the following formula, where SC denotes satisfied count, TC denotes tolerating count, FC denotes frustrated count and TS denotes total samples:

Apdex = (SC + TC/2 + FC × 0)/TS

Assume a data set of 100 samples, where you've set a performance objective of 5 seconds or better. Suppose 65 responses complete in 5 seconds or less, 25 take between 5 and 10 seconds, and the remaining 10 take more than 10 seconds. This example treats 10 seconds as the frustration threshold. With these parameters, you can determine the Apdex score as follows:

Apdex = (65 + (25/2) + (10 × 0))/100 = 0.775
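The calculation above can be sketched in a few lines of Python. The function name `apdex` and its two threshold parameters are illustrative assumptions; the sample data simply reproduces the 65/25/10 split from the example, with the satisfied limit at 5 seconds and the tolerating limit at 10 seconds.

```python
def apdex(response_times, satisfied_max, tolerating_max):
    """Compute an Apdex score from response times.

    Responses at or below satisfied_max count fully, responses above it
    but at or below tolerating_max count half, and the rest count zero.
    """
    samples = list(response_times)
    if not samples:
        raise ValueError("at least one sample is required")
    satisfied = sum(1 for t in samples if t <= satisfied_max)
    tolerating = sum(1 for t in samples if satisfied_max < t <= tolerating_max)
    return (satisfied + tolerating / 2) / len(samples)


# 65 satisfied, 25 tolerating and 10 frustrated samples (in seconds).
samples = [2.0] * 65 + [7.0] * 25 + [12.0] * 10
print(round(apdex(samples, satisfied_max=5.0, tolerating_max=10.0), 3))  # 0.775
```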

7. Average response time

The average response time is calculated by averaging the response times for all requests over a specified period of time. A low average response time implies better performance, as the application or server has taken less time to respond to requests or inputs.

The average response time is determined by dividing the total time taken to respond to the requests in a given time period by the total number of responses during the same period.
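As a minimal sketch of that division, assuming response times have already been collected in milliseconds for the period of interest (the function name and sample values are hypothetical):

```python
def average_response_time(response_times_ms):
    """Return total response time divided by the number of responses."""
    times = list(response_times_ms)
    if not times:
        raise ValueError("at least one response time is required")
    return sum(times) / len(times)


# Four hypothetical responses recorded during one period.
print(average_response_time([120, 80, 100, 300]))  # 150.0
```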

8. Error rates

This performance metric measures the percentage of requests that result in errors out of the total number of requests in a given time frame. A spike in this number can be an early warning that a major failure is likely.

You can track application errors using the following indicators:

- Logged exceptions. This indicator represents the number of unhandled and logged errors.

- Thrown exceptions. This indicator represents the total of all exceptions thrown.

- HTTP error percentage. This indicator represents the percentage of web requests that were unsuccessful and returned an error.

In essence, you can take advantage of error rates to monitor how often your application fails in real time. You can also keep an eye on this performance metric to detect and fix errors quickly, before you run into problems that can bring your entire site down.
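For the HTTP error percentage in particular, a simple sketch is to count the responses whose status code signals an error and divide by the total. The code below assumes that any status of 400 or above counts as an error; some teams count only 5xx server errors, so treat the cutoff as a configurable assumption. The function name is hypothetical.

```python
def http_error_percentage(status_codes, error_from=400):
    """Return the percentage of responses with status >= error_from."""
    codes = list(status_codes)
    if not codes:
        raise ValueError("at least one status code is required")
    errors = sum(1 for code in codes if code >= error_from)
    return 100.0 * errors / len(codes)


# 95 successful responses, 3 server errors and 2 "not found" responses.
codes = [200] * 95 + [500] * 3 + [404] * 2
print(http_error_percentage(codes))                  # 5.0
print(http_error_percentage(codes, error_from=500))  # 3.0
```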

9. Garbage collection

Garbage collection can cause your application to halt for a while when a GC cycle is in progress. It can also use a lot of CPU cycles, so it's important to measure garbage collection performance in your application.

To quantify garbage collection performance, you can use the following metrics:

- GC handles. This metric counts the total number of object references created in an application.

- Percentage time in GC. This is the percentage of elapsed time spent in garbage collection since the last GC cycle.

- Garbage collection pause time. This measures the time the entire application pauses during a GC cycle. You can reduce the pause time by limiting the number of objects that need to be marked, i.e., the objects the collector must examine during a cycle.

- Garbage collection throughput. This measures the percentage of the total time the application has not spent on garbage collection.

- Object creation/reclamation rate. This is a measure of the rate at which instances are created or reclaimed in an application. The higher the object creation rate, the more frequent GC cycles will be, consequently increasing CPU utilization.
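Pause time and throughput from the list above can be approximated in CPython with the `gc.callbacks` hook, which the interpreter invokes at the start and stop of each collection. The sketch below is specific to CPython's cyclic garbage collector, and the names `GcTimer`, `measure_gc` and `make_cycles` are hypothetical. Other runtimes, such as the JVM or .NET, expose these figures through their own counters and logs.

```python
import gc
import time


class GcTimer:
    """Records the duration of each garbage collection via gc.callbacks."""

    def __init__(self):
        self.pauses = []
        self._start = None

    def __call__(self, phase, info):
        if phase == "start":
            self._start = time.perf_counter()
        elif phase == "stop" and self._start is not None:
            self.pauses.append(time.perf_counter() - self._start)
            self._start = None


def measure_gc(work):
    """Run work() and summarize GC pause time and throughput."""
    timer = GcTimer()
    gc.callbacks.append(timer)
    wall_start = time.perf_counter()
    try:
        work()
    finally:
        gc.callbacks.remove(timer)
    wall = time.perf_counter() - wall_start
    gc_time = sum(timer.pauses)
    return {
        "collections": len(timer.pauses),
        "total_pause_s": gc_time,
        "max_pause_s": max(timer.pauses, default=0.0),
        # Throughput: share of time NOT spent in garbage collection.
        "throughput_pct": 100.0 * (1 - gc_time / wall) if wall > 0 else 100.0,
    }


def make_cycles():
    # Create reference cycles, which only the cyclic collector can reclaim.
    for _ in range(1000):
        a = []
        b = [a]
        a.append(b)
    gc.collect()  # force at least one collection


print(measure_gc(make_cycles))
```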

KPIs for APIs

API analysis and reporting are important aspects of app development, and APIs have their own set of KPIs that development teams need to track.

Some of the most important KPIs for APIs to pay attention to include:

- Usage count. This indicates the number of times an API call is made over a certain period of time.

- Request latency. This indicates the amount of time it takes for an API to process incoming requests.

- Request size. This indicates the size of incoming request payloads.

- Response size. This indicates the size of outgoing response payloads.
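The four API KPIs above can be derived from a single stream of call records. Here is a minimal sketch that aggregates them per endpoint; the `ApiCall` record, its field names and the `api_kpis` function are assumptions made for illustration and do not correspond to any particular API gateway or APM product.

```python
from dataclasses import dataclass


@dataclass
class ApiCall:
    endpoint: str
    latency_ms: float
    request_bytes: int
    response_bytes: int


def api_kpis(calls):
    """Return usage count and average latency/sizes for each endpoint."""
    totals = {}
    for call in calls:
        t = totals.setdefault(
            call.endpoint,
            {"count": 0, "latency": 0.0, "request": 0, "response": 0},
        )
        t["count"] += 1
        t["latency"] += call.latency_ms
        t["request"] += call.request_bytes
        t["response"] += call.response_bytes
    return {
        endpoint: {
            "usage_count": t["count"],
            "avg_latency_ms": t["latency"] / t["count"],
            "avg_request_bytes": t["request"] / t["count"],
            "avg_response_bytes": t["response"] / t["count"],
        }
        for endpoint, t in totals.items()
    }


# Hypothetical call log for two endpoints.
calls = [
    ApiCall("/orders", 40.0, 200, 1000),
    ApiCall("/orders", 60.0, 400, 3000),
    ApiCall("/users", 10.0, 100, 500),
]
for endpoint, kpis in api_kpis(calls).items():
    print(endpoint, kpis)
```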

10. Request rates

Request rate is an essential metric that provides insights into the increase and decrease in traffic experienced by your application. In other words, it provides insights into the inactivity and spikes in traffic that your application receives. You can correlate request rates with other application performance metrics to determine how your application can scale. You should also keep an eye on the number of concurrent users in your application.
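To keep an eye on concurrency, one common approach is to compute the peak number of overlapping sessions or requests from their start and end times. The sketch below assumes each session is recorded as a `(start, end)` pair in seconds and that a session ending at a given instant does not overlap one starting at that same instant; `peak_concurrency` is a hypothetical helper name.

```python
def peak_concurrency(intervals):
    """Return the maximum number of intervals active at the same time."""
    events = []
    for start, end in intervals:
        events.append((start, 1))   # a session begins
        events.append((end, -1))    # a session ends
    # Sorting tuples puts (t, -1) before (t, 1), so an end at time t is
    # processed before a start at the same time t.
    events.sort()
    current = peak = 0
    for _time, delta in events:
        current += delta
        peak = max(peak, current)
    return peak


# Four hypothetical sessions; three of them overlap between t=12 and t=14.
sessions = [(0, 10), (5, 15), (10, 20), (12, 14)]
print(peak_concurrency(sessions))  # 3
```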

Conclusion

Managing and reviewing application metrics turns otherwise meaningless bits of technical information into an easy-to-understand narrative that reveals the system's reliability and gives insight into the user experience.

For more information on application performance monitoring, read 5 benefits of APM for businesses and Using AI and machine learning for APM.