application security

What is application security?

Application security, or appsec, is the practice of using security software, hardware, techniques, best practices and procedures to protect computer applications from external security threats.

Security was once an afterthought in software design. Today, it's an increasingly critical concern for every aspect of application development, from planning through deployment and beyond. The volume of applications developed, distributed, used and patched over networks is rapidly expanding. As a result, application security practices must address an increasing variety of threats.

How does application security work?

Security measures include improving security practices in the software development lifecycle and throughout the application lifecycle. All appsec activities should minimize the likelihood that malicious actors can gain unauthorized access to systems, applications or data. The ultimate goal of application security is to prevent attackers from accessing, modifying or deleting sensitive or proprietary data.

Any action taken to ensure application security is a countermeasure or security control. The National Institute of Standards and Technology (NIST) defines a security control as: "A safeguard or countermeasure prescribed for an information system or an organization designed to protect the confidentiality, integrity, and availability of its information and to meet a set of defined security requirements."

An application firewall is a countermeasure commonly used for software. Firewalls determine how files are executed and how data is handled based on the specific installed program. Routers are the most common countermeasure for hardware. They prevent the Internet Protocol (IP) address of an individual computer from being directly visible on the internet.

Other countermeasures include the following:

- conventional firewalls

- encryption and decryption programs

- antivirus programs

- spyware detection and removal programs

- biometric authentication systems

Why is application security important?

Application security -- including the monitoring and managing of application vulnerabilities -- is important for several reasons, including the following:

- Finding and fixing vulnerabilities reduces security risks and helps shrink an organization's overall attack surface.

- Software vulnerabilities are common. While not all of them are serious, even noncritical vulnerabilities can be combined for use in attack chains. Reducing the number of security vulnerabilities and weaknesses helps reduce the overall impact of attacks.

- Taking a proactive approach to application security is better than reactive security measures. Being proactive enables defenders to identify and neutralize attacks earlier, sometimes before any damage is done.

- As enterprises move more of their data, code and operations into the cloud, attacks against those assets can increase. Application security measures can help reduce the impact of such attacks.

Neglecting application security can expose an organization to potentially existential threats.

What is threat modeling?

Threat modeling, or threat assessment, is the process of reviewing the threats to an enterprise or information system and then formally evaluating the degree and nature of those threats. Threat modeling is one of the first steps in application security and usually includes the following five steps:

- rigorously defining enterprise assets;

- identifying what each application does or will do with respect to these assets;

- creating a security profile for each application;

- identifying and prioritizing potential threats; and

- documenting adverse events and the actions taken in each case.

In this context, a threat is any potential or actual adverse event that can compromise the assets of an enterprise. These include both malicious events, such as a denial-of-service attack, and unplanned events, such as the failure of a storage device.
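The five steps above can be sketched as a small data model. This is only an illustration: the class names, the 1-to-5 scales and the likelihood-times-impact score are assumptions chosen for the example, not part of any formal threat modeling standard.

```python
from dataclasses import dataclass, field


@dataclass
class Threat:
    name: str
    asset: str        # the enterprise asset the threat affects
    likelihood: int   # 1 (rare) to 5 (almost certain), illustrative scale
    impact: int       # 1 (minor) to 5 (severe), illustrative scale
    malicious: bool   # False for unplanned events such as hardware failure

    @property
    def score(self) -> int:
        # Simple likelihood x impact ranking (an assumed scoring scheme)
        return self.likelihood * self.impact


@dataclass
class ApplicationProfile:
    name: str
    assets: list[str]
    threats: list[Threat] = field(default_factory=list)

    def prioritized(self) -> list[Threat]:
        """Return threats ordered from highest to lowest score."""
        return sorted(self.threats, key=lambda t: t.score, reverse=True)


# Hypothetical application and threats, for illustration only
profile = ApplicationProfile(
    name="billing-portal",
    assets=["customer records", "payment API"],
    threats=[
        Threat("Denial-of-service attack", "payment API", 3, 4, True),
        Threat("Storage device failure", "customer records", 2, 5, False),
        Threat("SQL injection", "customer records", 4, 5, True),
    ],
)

for threat in profile.prioritized():
    print(f"{threat.score:>2}  {threat.name} -> {threat.asset}")
```

Both malicious and unplanned events sit in the same list, so they are ranked against each other when deciding where to spend effort first.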

Common application security weaknesses and threats

The most common application security weaknesses are well known, and various organizations track them over time. The Open Web Application Security Project (OWASP) Top Ten list and the Common Weakness Enumeration (CWE) compiled by the information security community are two of the best-known lists of application weaknesses.

The OWASP list focuses on web application software. The CWE list focuses on specific issues that can occur in any software context. Its goal is to provide developers with usable guidance on how to secure their code.

The top 10 items on the CWE list and their CWE scores are the following:

| CWE | Description | CWE score |
| --- | --- | --- |
| Out-of-bounds write | Software that improperly writes past a memory boundary can cause data corruption, system crash or enable malicious code execution. | 65.93 |
| Cross-site scripting | Improper neutralization of potentially harmful input during webpage generation enables attackers to hijack website users' connections. | 46.84 |
| Out-of-bounds read | Software that improperly reads past a memory boundary can cause a crash or expose sensitive system information that attackers can use in other exploits. | 24.9 |
| Improper input validation | Lack of validation or improper validation of input or data enables attackers to run malicious code on the system. | 20.47 |
| Operating system (OS) command injection | Improper neutralization of potentially harmful elements used in an OS command. | 19.55 |
| SQL injection | Software that doesn't properly neutralize potentially harmful elements of a SQL command. These flaws enable attacks against databases. | 19.54 |
| Use after free | Software that references memory that had been freed can cause the program to crash or enable code execution. | 16.83 |
| Path traversal (directory traversal) | Improper limitation of a pathname to a restricted directory. | 14.69 |
| Cross-site request forgery | When a web app fails to validate that a user request was intentionally sent, it may expose data to attackers or enable remote malicious code execution. | 14.46 |
| Unrestricted upload of malware or other potentially dangerous files | Software that permits unrestricted file uploads opens the door for attackers to deliver malicious code for remote execution. | 8.45 |

Application weaknesses can be mitigated or eliminated and are under the control of the organization that owns the application. Threats, on the other hand, are generally external to the applications. Some threats, like physical damage to a data center due to adverse weather or an earthquake, are not explicitly malicious acts. However, most cybersecurity threats result from the actions of malicious actors.
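To make one of the weaknesses listed above concrete, the following sketch contrasts a query built by string concatenation with a parameterized query, using Python's built-in `sqlite3` module. The table, the function names and the payload are hypothetical and exist only for this illustration.

```python
import sqlite3

# In-memory database with a hypothetical users table
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [("alice", "admin"), ("bob", "user")],
)


def find_user_unsafe(name: str) -> list[tuple]:
    # VULNERABLE: user input is concatenated into the SQL text,
    # so quote characters in the input can change the query itself.
    query = "SELECT name, role FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # The input is passed as a bound parameter and treated as data only.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "nobody' OR '1'='1"
print(find_user_unsafe(payload))  # the injected OR clause matches every row
print(find_user_safe(payload))    # no user has this literal name
```

The weakness is under the application owner's control: the fix is a change to how the query is built, independent of who sends the payload.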

What follows is the OWASP Top Ten list of web application security risks, updated most recently in 2021.

| 2021 rank | Description | 2017 rank |
| --- | --- | --- |
| 1 | Broken access control refers to vulnerabilities that enable attackers to elevate their own permissions or otherwise bypass access controls to gain access to data or systems they are not authorized to use. | 5 |
| 2 | Cryptographic failures refer to vulnerabilities caused by failures to apply cryptographic solutions to data protection. This includes improper use of obsolete cryptographic algorithms, improper implementation of cryptographic protocols and other failures in using cryptographic controls. | 3 |
| 3 | Injection flaws enable attackers to submit hostile data to an application. This includes crafted data that incorporates malicious commands, redirects data to malicious web services or reconfigures applications. | 1 |
| 4 | Insecure design includes risks incurred because of system architecture or design flaws. These flaws relate to the way the application is designed, where an application relies on processes that are inherently insecure. Examples include architecting an application with an insecure authentication process or designing a website that does not protect against bots. | New |
| 5 | Security misconfiguration flaws occur when an application's security configuration enables attacks. Examples include misconfigured filtering of inbound packets and leaving a default user ID, password or user authorization enabled. | 6 |
| 6 | Vulnerable and outdated components relate to an application's use of software components that are unpatched, out of date or otherwise vulnerable. These components can be a part of the application platform, as in an unpatched version of the underlying OS or an unpatched program interpreter. They can also be part of the application itself, as with old application programming interfaces or software libraries. | 9 |
| 7 | Identification and authentication failures encompass authentication weaknesses, including flaws that enable credential stuffing and brute-force attacks, or that lack support for multifactor authentication and invalidation of expired or inactive user sessions. | 2 |
| 8 | Software and data integrity failures cover vulnerabilities related to application code and infrastructure that fail to protect against violations of data and software integrity. An example is software updates that are delivered and installed automatically without a mechanism, such as a digital signature, to ensure the updates are properly sourced. | New |
| 9 | Security logging and monitoring failures include failures to monitor systems for all relevant events and maintain logs of these events to detect and respond to active attacks. | 10 |
| 10 | Server-side request forgery refers to flaws that occur when an application does not validate remote resources users provide. Attackers use these vulnerabilities to force applications to access malicious web destinations. | New |

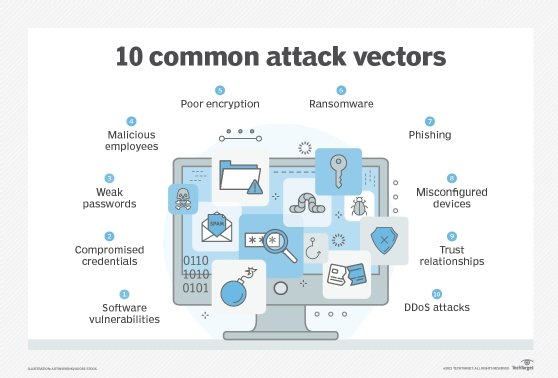
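As an illustration of the last item in the OWASP list, server-side request forgery, the sketch below validates a user-supplied URL against an allowlist before the application would fetch it. The host names and the `is_allowed_url` helper are hypothetical, and an allowlist is only one possible mitigation. A production check would also need to consider redirects and DNS resolution, which this sketch does not cover.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real deployment would load this from configuration.
ALLOWED_HOSTS = {"images.example.com", "cdn.example.com"}


def is_allowed_url(url: str) -> bool:
    """Return True only for HTTPS URLs whose host is on the allowlist."""
    try:
        parts = urlsplit(url)
        # hostname is lowercased and excludes any user:password@ prefix and port
        host = parts.hostname
    except ValueError:
        # Malformed URLs (for example, a broken IPv6 literal) are rejected
        return False
    return parts.scheme == "https" and host in ALLOWED_HOSTS


print(is_allowed_url("https://images.example.com/logo.png"))
print(is_allowed_url("http://169.254.169.254/latest/meta-data/"))
print(is_allowed_url("https://images.example.com@evil.test/logo.png"))
```

Comparing the parsed host rather than searching the raw string matters here: the third URL contains an allowed name, but its actual host is `evil.test`.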

Threats exploit weaknesses and vulnerabilities. Common application security threats include the following:

- Software injection attacks exploit vulnerabilities in application code that enable attackers to insert code into the application through ordinary user input.

- Cross-site scripting attacks exploit vulnerabilities in the way web applications handle untrusted input. Attackers inject scripts into pages that other users view, often to steal or forge session cookies so that the attacker can impersonate authorized users.

- Buffer overflow attacks exploit vulnerabilities in the way applications store working data in system buffers. Secure development best practices minimize these attacks. These include using data validation and programming languages that safely manage memory allocations, keeping software updated with the latest patches and relying on the principle of least privilege.
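Threats that arrive through ordinary user input, such as cross-site scripting, are commonly countered by neutralizing that input before it reaches a page. The sketch below uses `html.escape` from Python's standard library. The `render_comment` function and the sample payload are hypothetical, and real web frameworks usually perform this escaping in their template engines.

```python
from html import escape


def render_comment(author: str, text: str) -> str:
    # Escape both fields so that markup in user input is displayed as text
    # instead of being interpreted by the browser.
    return f"<p><b>{escape(author)}</b>: {escape(text)}</p>"


malicious = "<script>steal(document.cookie)</script>"
print(render_comment("mallory", malicious))
```

The script tags come out as `&lt;script&gt;` and `&lt;/script&gt;`, so a browser shows the payload as text and does not execute it.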

Common categories of application security

Application security can be categorized in different ways; for example, by specific function, such as authentication or appsec testing. It can also be divided by domain, like application security for web, mobile, internet of things (IoT) and other embedded applications.

Security professionals use different tactics and strategies for application security, depending on the application being developed and used. Application security measures and countermeasures can be characterized functionally, by how they are used, or tactically, by how they work.

Application security controls can be classified in different ways, as well. One approach is to categorize them based on what they do.

- Application security testing controls help keep weaknesses and vulnerabilities out of the application as it is being developed.

- Access control safeguards prevent unauthorized access to applications. This protects against hijacking of authenticated user accounts as well as against inadvertently giving an authenticated user access to restricted data that the user is not authorized to see.

- Authentication controls are used to ensure that users or programs accessing application resources are who or what they say they are.

- Authorization controls are used to ensure that users or programs that have been authenticated are actually authorized to access application resources. Authorization and authentication controls are closely related and often implemented with the same tools.

- Encryption controls are used to encrypt and decrypt data that needs to be protected. Encryption controls can be implemented at different layers for networked applications. For example, an application can implement encryption within the application itself by encrypting all user input and output. Alternatively, an application can rely on encryption controls such as those provided by network layer protocols, like IP Security (IPsec), which encrypt data being transmitted to and from the application.

- Logging controls are used to track application activities. They are indispensable for maintaining accountability. Without logging, it can be difficult or impossible to identify what resources an attack has exposed. Comprehensive application logs are also an important control for testing application performance.
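The difference between authentication, authorization and logging controls can be shown in a few lines. The sketch below is a simplified illustration that uses only Python's standard library. The in-memory `USERS` store, the permission strings and the PBKDF2 iteration count are assumptions made for the example, not recommendations for production use.

```python
import hashlib
import hmac
import logging
import os

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("appsec-demo")

ITERATIONS = 100_000  # illustrative value only


def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


# Hypothetical in-memory user store; real systems use a database or directory.
_salt = os.urandom(16)
USERS = {
    "alice": {
        "salt": _salt,
        "hash": hash_password("correct horse", _salt),
        "permissions": {"reports:read"},
    }
}


def authenticate(username: str, password: str) -> bool:
    """Authentication: is the caller who they claim to be?"""
    user = USERS.get(username)
    if user is None:
        log.warning("login failed: unknown user %r", username)
        return False
    candidate = hash_password(password, user["salt"])
    ok = hmac.compare_digest(candidate, user["hash"])  # constant-time compare
    log.info("login %s for %r", "succeeded" if ok else "failed", username)
    return ok


def authorize(username: str, permission: str) -> bool:
    """Authorization: may this authenticated caller perform the action?"""
    allowed = permission in USERS.get(username, {}).get("permissions", set())
    log.info("%r %s %s", username, "granted" if allowed else "denied", permission)
    return allowed


if authenticate("alice", "correct horse"):
    authorize("alice", "reports:read")    # granted
    authorize("alice", "reports:delete")  # denied, although alice is authenticated
```

Every decision is written to the log, which is what later makes it possible to reconstruct which resources an account touched.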

Another way to classify application security controls is how they protect against attacks.

- Preventive controls are used to keep attacks from happening. Their objective is to protect against vulnerabilities. For example, access control and encryption are often used to prevent unauthorized users from accessing sensitive information. Comprehensive application security testing is another preventive control, applied in the software development lifecycle.

- Corrective controls reduce the effect of attacks or other incidents. For example, using virtual machines, terminating malicious or vulnerable programs, or patching software to eliminate vulnerabilities are all corrective controls.

- Detective controls are fundamental to a comprehensive application security architecture because they may be the only way security professionals are able to determine an attack is taking place. Detective controls include intrusion detection systems, antivirus scanners and agents that monitor system health and availability.
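A detective control can be as simple as a rule that watches login events. The sketch below flags source addresses with repeated failed logins inside a sliding time window. The thresholds, the event format and the function name are assumptions made for this illustration; real intrusion detection systems are far more elaborate.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 60  # illustrative window
MAX_FAILURES = 3     # illustrative threshold


def find_suspicious_sources(events):
    """Flag sources with MAX_FAILURES failed logins within WINDOW_SECONDS.

    events: iterable of (timestamp_seconds, source_ip, success),
    assumed to be sorted by timestamp.
    """
    recent = defaultdict(deque)  # source_ip -> timestamps of recent failures
    flagged = set()
    for ts, ip, success in events:
        if success:
            continue
        window = recent[ip]
        window.append(ts)
        # Drop failures that have fallen out of the sliding window
        while ts - window[0] > WINDOW_SECONDS:
            window.popleft()
        if len(window) >= MAX_FAILURES:
            flagged.add(ip)
    return flagged


# Hypothetical events using documentation-reserved IP addresses
events = [
    (0, "203.0.113.7", False),
    (10, "203.0.113.7", False),
    (15, "198.51.100.2", False),
    (20, "203.0.113.7", False),
    (200, "198.51.100.2", False),
    (205, "198.51.100.2", True),
]
print(find_suspicious_sources(events))
```

Only `203.0.113.7` is flagged: its three failures fall within one minute, while the two failures from `198.51.100.2` are more than three minutes apart.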

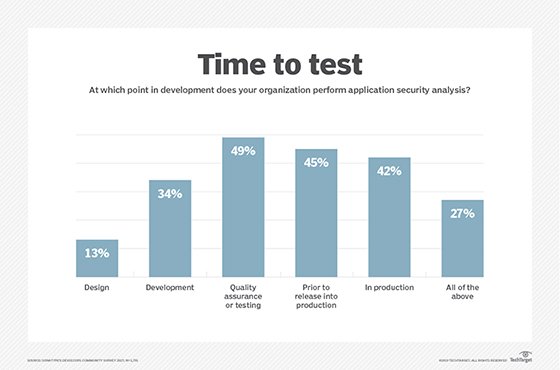

The process of securing an application is ongoing, from the earliest stages of application design to ongoing monitoring and testing of deployed applications. Security teams use a broad range of tools and testing practices.

Application security testing and tools

Tools and techniques used for application security are almost as numerous and diverse as those used for application development.

Most of these tools and techniques fall into one of the following four categories:

- Secure development platforms help developers avoid security issues by imposing and enforcing standards and best practices for secure development.

- Code scanning tools enable developers to review new and existing code for potential vulnerabilities or other exposures.

- Application testing tools automate the testing of finished code. Application testing tools can be used during the development process, or they can be applied to existing code to identify potential issues. Application testing tools can be used for static, dynamic, mobile or interactive testing.

- Application shielding tools are used to protect applications that are in release. Some examples include the following:

  - threat detection tools to examine the network and environment in which an application is running, and flag vulnerabilities and potential threats;

  - encryption tools for protecting data from interception;

  - code obfuscation tools to make source code hard or impossible to decipher and reverse engineer; and

  - runtime application self-protection tools, which combine elements of application testing tools and application shielding tools to enable continuous monitoring of an application.
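As a rough illustration of the code scanning category above, the following sketch uses Python's standard `ast` module to flag calls to `eval` and `exec` in source code without running it. The rule set and the function name are hypothetical; commercial and open source scanners apply many more rules and track how data flows through the program.

```python
import ast

# Hypothetical rule set: built-in calls that often deserve a security review
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, function name) for each risky call in the source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Only direct calls by name are matched, e.g. eval(...)
        if isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                findings.append((node.lineno, node.func.id))
    return sorted(findings)


sample = '''
def calc(expr):
    return eval(expr)

def greet(name):
    print("hello", name)
'''

print(find_risky_calls(sample))  # [(3, 'eval')]
```

Because the code is parsed and not executed, this kind of check can run on every commit, which is the static style of testing defined in the glossary below.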

Of course, application security exists within the context of OSes, networks and other related infrastructure components that must also be secured. To be fully secure, an application should be protected from all types of attack.

Best practices for application security

Best practices for application security fall into several general categories.

- What must be protected? Experts recommend security professionals map out all of the systems, software and other computing resources -- in the cloud and on premises -- that are a part of the application.

- What is the worst that can happen? Experts recommend understanding and quantifying what is at stake if the worst does happen. This enables organizations to allocate resources appropriately for avoiding risk.

- What could happen? How could a successful attack be carried out? Threats are the things that could negatively affect the application, the organization deploying the application or the application users.

Specific tips for application security best practices focus on identifying general weaknesses and vulnerabilities and addressing them. Other best practices depend on applying specific practices like adopting a security framework or implementing secure software development practices appropriate for the application type.

Application security trends and future

While the concepts of application security are well understood, they are still not always well implemented. Security experts have had to adjust as computing has changed. For example, as the industry shifted from time-shared mainframes to networked personal computers, application security professionals had to change how they identified and addressed the most urgent vulnerabilities.

Now, as companies are moving more information assets and resources to the cloud, application security is shifting its focus. Likewise, as application developers increasingly rely on automation, machine learning and artificial intelligence, so too will application security professionals need to incorporate those technologies into their own tools.

As the risks of deploying insecure applications increase, application developers will also increasingly find themselves working with development tools and techniques that can help guide secure development.

Glossary of application security terms

Dynamic application security testing (DAST). Testing methodology that analyzes applications as they are running. DAST focuses on inputs and outputs and how the application reacts to malicious or faulty data.

Exploit. A method where attackers take advantage of a vulnerability to gain access to protected or sensitive resources. An exploit can use malware, rootkits or social engineering to take advantage of vulnerabilities.

Instrumented or interactive application security testing (IAST). A testing methodology that combines the best features of static application security testing (SAST) and DAST, analyzing source code, running applications, configurations, HTTP traffic and more.

Penetration testing. Testing methodology that depends on ethical hackers who use hacking methods to assess security posture and identify possible entry points to an organization's infrastructure -- at the organization's request.

Risk. The potential cost of a successful attack. Risk assesses what is at stake if an application is compromised, or a data center is damaged by a hurricane or some other event or attack.

Runtime application self-protection (RASP). Tools that combine elements of application testing tools and application shielding tools to enable continuous monitoring of an application.

Static application security testing (SAST). Testing methodology that analyzes application source code for coding and design flaws and security vulnerabilities.

Threat. Anything that can cause damage to a system or application. Threats can be natural events like earthquakes or floods, or they can be associated with a person's actions. Unintentional threats occur when a person's actions are not intended to cause harm. Intentional threats occur because of malicious activity. Threats exploit vulnerabilities.

Vulnerability. A flaw or bug in an application or related system that can be used to carry out a threat to the system. If it were possible to identify and remediate all vulnerabilities in a system, it would be fully resistant to attack. However, all systems have vulnerabilities and, therefore, are attackable.

Web application firewall (WAF). A common countermeasure that monitors and filters HTTP traffic. WAFs examine web traffic for specific types of attacks that depend on the exchange of network messages at the application layer.

Application security is a critical part of software quality, especially for distributed and networked applications. Learn about the differences between network security and application security to make sure all security bases are covered. Also, discover the differences between SAST, DAST and IAST to better understand application security testing methodologies.