Where requirements-based tests fit in software testing

Requirements-based testing has its upsides and downsides, and it's not right for every software project. But certain dev teams should still adopt the method. Learn which ones.

Requirements-based testing is a preliminary test strategy that's used to validate system requirements. It was popularized in the early years of software development, particularly within development teams that used Waterfall methods.

Waterfall dates back to the 1970s and uses a linear, stage-based approach to software design. The first stage involves requirements gathering and documentation. The requirements from this stage also serve as the basis for the test cases in requirements-based testing.

Let's examine the intricacies of requirements-based testing, and whether the method is still helpful in current software development practices.

The requirements

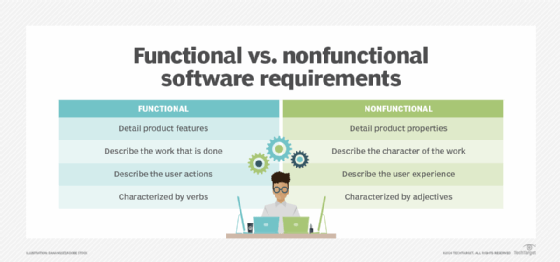

In an application, an example functional requirement can be, "The system shall allow a user to delete their account." With that requirement, a developer can easily run a test case by creating an account and testing to see if the user can delete that account.
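As a rough illustration, the account-deletion requirement above could be turned into an automated test along these lines. This is a minimal sketch: `AccountService` and its methods are hypothetical stand-ins for a real system, kept in memory so the example runs on its own.

```python
class AccountService:
    """Hypothetical in-memory stand-in for the system under test."""

    def __init__(self):
        self._accounts = set()

    def create_account(self, username):
        self._accounts.add(username)

    def delete_account(self, username):
        # set.remove raises KeyError if the account does not exist.
        self._accounts.remove(username)

    def has_account(self, username):
        return username in self._accounts


def test_user_can_delete_their_account():
    """Requirement: 'The system shall allow a user to delete their account.'"""
    service = AccountService()

    # Arrange: the user needs an account before it can be deleted.
    service.create_account("alice")
    assert service.has_account("alice")

    # Act and assert: after deletion, the account must be gone.
    service.delete_account("alice")
    assert not service.has_account("alice")


if __name__ == "__main__":
    test_user_can_delete_their_account()
```

The test name and docstring quote the requirement directly, which keeps the link between the requirement and its test easy to follow.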

Nonfunctional requirements are equally important to test. Some nonfunctional requirements to test include:

- whether the system can handle the expected load;

- how quickly a user can complete an action within the system; and

- how quickly the system can process and return data.
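A timing requirement like the last one in this list can also be checked in code. The sketch below assumes a hypothetical time budget and uses a placeholder `process_and_return_data` function; a real project would take the budget from its requirements document and measure the actual system.

```python
import time


def elapsed_seconds(action):
    """Return how long a zero-argument callable takes to run, in seconds."""
    start = time.perf_counter()
    action()
    return time.perf_counter() - start


def meets_time_budget(action, budget_seconds):
    """True if the action finishes within the given time budget."""
    return elapsed_seconds(action) <= budget_seconds


def process_and_return_data():
    # Placeholder workload standing in for a real system call.
    return sorted(range(10_000), reverse=True)


if __name__ == "__main__":
    # Hypothetical requirement: data is processed and returned within 2 seconds.
    assert meets_time_budget(process_and_return_data, budget_seconds=2.0)
```

A single measurement can be noisy, so teams that rely on checks like this often repeat the measurement and compare an average or percentile against the budget.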

Requirements-based testing confirms the software's functionality at a surface level, based on requirements gathered at the beginning of a software project.

Requirements-based testing today

Agile practices such as Scrum, sprints and planning poker have largely displaced requirements-based testing in modern software development because of the move away from a Waterfall approach.

Waterfall encourages strict deadlines and well-defined, concrete stages that feed into one another, whereas Agile focuses on providing customer value earlier in the development process and shrugs off any notion of a requirement-heavy planning stage. Agile defines only a minimum set of requirements to get something in front of the customer as quickly as possible.

Without the detailed, requirements-heavy outline that a Waterfall project produces, development teams use requirements-based testing much less often now.

Requirements-based testing pros and cons

In Waterfall, requirements-based testing has the advantage of being well-defined and time-boxed, which makes testing easier to plan. Developers can use their knowledge of the number of requirements that will define the test suite to more accurately estimate how long it will take to test the software. In the same way, it's easier to design tests when developers know about the explicitly defined requirements from the early stages of a Waterfall project. So, if testers map tests back to a project's requirements (using a software requirements document), they can conceivably measure test coverage.
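Mapping tests back to requirements and measuring coverage can be sketched in a few lines. The requirement IDs and test names below are made up for illustration, and this counts a requirement as covered if at least one test references it, which is only one of several possible definitions of coverage.

```python
def requirement_coverage(requirement_ids, test_to_requirements):
    """Return (coverage ratio, uncovered requirement IDs).

    requirement_ids: list of IDs from the requirements document.
    test_to_requirements: dict mapping a test name to the IDs it exercises.
    """
    covered = set()
    for exercised_ids in test_to_requirements.values():
        covered.update(exercised_ids)

    # Ignore IDs that tests mention but the requirements document does not list.
    covered &= set(requirement_ids)

    uncovered = [req for req in requirement_ids if req not in covered]
    ratio = len(covered) / len(requirement_ids) if requirement_ids else 0.0
    return ratio, uncovered


# Hypothetical requirements and test mapping.
requirements = ["REQ-1", "REQ-2", "REQ-3", "REQ-4"]
tests = {
    "test_delete_account": ["REQ-1"],
    "test_login": ["REQ-2"],
    "test_load": ["REQ-2", "REQ-3"],
}

ratio, uncovered = requirement_coverage(requirements, tests)
# ratio is 0.75 and uncovered is ["REQ-4"]
```

The list of uncovered requirements is often the more useful output, since it tells testers exactly which tests are still missing.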

Requirements-based testing, however, can easily miss important parts of software quality. Requirements can be too brief to capture important elements of software design. For example, requirements that "software be intuitive to use" or that "a system be secure" would need to be defined in a lot of detail before a team could test against them. Such qualities can often be more easily covered with a manual exploratory session.

As such, teams can turn to exploratory testing to prevent headaches associated with detailed, strict requirements. Often a team's understanding benefits from testers just putting the software system in front of a user and observing their behaviors during certain use cases.

Are requirements-based tests right for your project?

Output is one of the most important factors that developers should consider when they contemplate requirements-based testing for their software project. These tests generate a logical and well-defined output that a team can map to requirements. The tests will pass or fail depending on whether the software meets the given requirement.
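The pass-or-fail output described here could be produced with something like the following sketch. The requirement IDs and the checks themselves are placeholders; the assumption is that each requirement has one check that returns a true or false result, and that a check that raises an error counts as a failure.

```python
def run_requirement_checks(checks):
    """Run one check per requirement and return a PASS/FAIL report.

    checks: dict mapping a requirement ID to a zero-argument callable
    that returns True when the requirement is met.
    """
    report = {}
    for requirement_id, check in checks.items():
        try:
            report[requirement_id] = "PASS" if check() else "FAIL"
        except Exception:
            # A check that crashes has not demonstrated the requirement.
            report[requirement_id] = "FAIL"
    return report


# Hypothetical checks standing in for real tests of the system.
example_checks = {
    "REQ-1": lambda: 1 + 1 == 2,               # requirement met
    "REQ-2": lambda: "abc".isdigit(),          # requirement not met
    "REQ-3": lambda: int("not a number") == 0,  # check raises ValueError
}

report = run_requirement_checks(example_checks)
# report is {"REQ-1": "PASS", "REQ-2": "FAIL", "REQ-3": "FAIL"}
```

A report keyed by requirement ID is the kind of output stakeholders can read without knowing how the tests are implemented.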

Other types of testing -- like exploratory testing -- are much more open-ended. When testing in an exploratory manner, there are certain test goals, but there often aren't specific requirements for testers to refer to. For example, a tester's goal might be to break a system with bad input. In an exploratory session for this scenario, the tester would try to press buttons many times and enter unexpected inputs. An exploratory session wouldn't generate test results that you would see if you ran a test suite, but it may be an easier way to find and document bugs within a system than stricter requirements-based testing.

Other types of tests can enable a team to move quicker and release more features, but the detailed results from requirements-based testing can be useful for project stakeholders to quickly understand a project's status and remaining timeline.

Requirements-based testing may be a bit dated, but it's still worth your consideration. If the project has customers that have highly specific needs and require detailed documentation, requirements-based testing may fit the bill to evaluate the system's success. If your project is more open-ended and features iteratively designed systems that prioritize agility and delivery to the customer, then requirements-based tests likely won't be the right method to follow.