Comparing Waterfall vs. Agile vs. DevOps methodologies

The software development process can be organized around a variety of methodologies, each with its own advantages and disadvantages. Is your team on the right path?

Software development practices are continuously evolving. To help you deliver quality software in a way that works for your organization, let's compare Waterfall, Agile and DevOps.

Waterfall basics

Waterfall is a traditional, plan-driven practice for developing systems. One of the earliest software development lifecycle (SDLC) approaches, it proceeds in stages: gather and analyze software requirements, design, develop, test and deploy into operations. Each stage must produce its output before the next stage can begin.

Agile basics

Agile software development practices have been in use since the early 1990s, born out of the need to adapt and rapidly deliver products; the term itself was formalized by the Agile Manifesto in 2001. The Agile methodology allows teams to explore new ideas and determine more quickly which of those ideas are viable.

Additionally, Agile methods are designed to adapt to changing business needs during development. There are two primary frameworks used in Agile: Scrum and Kanban. Key components of Scrum are iterations (sprints) and velocity, the amount of work a team completes per iteration, while Kanban centers on tracking and limiting work in progress.
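To make the two ideas concrete, here is a minimal sketch of how a team might compute Scrum velocity and enforce a Kanban work-in-progress limit. The function names, the three-sprint averaging window and the sample numbers are illustrative assumptions, not part of either framework's definition.

```python
from statistics import mean


def velocity(completed_points, window=3):
    """Average story points completed over the most recent sprints.

    `window` (assumed here to be 3) is a team choice, not a Scrum rule.
    """
    recent = completed_points[-window:]
    return mean(recent)


def can_pull(cards_in_column, wip_limit):
    """Kanban-style check: allow a new card only while the column is below its limit."""
    return len(cards_in_column) < wip_limit


# Hypothetical data: story points finished in four past sprints.
sprint_history = [21, 18, 24, 27]
print(velocity(sprint_history))

# Hypothetical "In progress" column with a WIP limit of 3.
in_progress = ["login-form", "billing-api"]
print(can_pull(in_progress, wip_limit=3))
```

A team could use the velocity figure to size the next sprint, and the WIP check to decide whether someone should start new work or help finish what is already in flight.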

DevOps basics

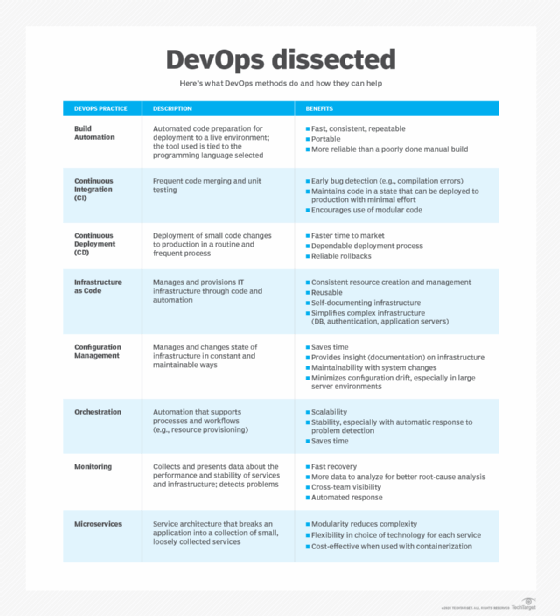

DevOps is an engineering culture that aims to unify development and operations in ways that lead to more efficient development. When done effectively, DevOps practices result in faster, more dependable software releases that are aligned to business operations. It is not a standard or framework, but an organizational collaboration that has given rise to a set of best practices, including continuous integration, continuous delivery and continuous testing.

DevOps merges development and operations into one team that's focused on speedy delivery and stable infrastructure. Its goals include:

- fast delivery of completed code to production;

- minimal production failures; and

- immediate recovery from failures.

Key differences between Waterfall, Agile and DevOps

Waterfall is best used on software development projects that are well defined, predictable and unlikely to significantly change. This usually applies to simpler, small-scale projects. Its sequential nature makes it largely unresponsive to adjustments, so budgets and delivery timelines will be affected when business requirements change during the development cycle.

Waterfall projects have a high degree of process definition and little or no variability in output, and they do not accommodate feedback during the development cycle.

Agile methods are based on iterative, incremental development that rapidly delivers a viable business product. Incremental development breaks the product into smaller pieces: the team builds one piece, assesses the result and adapts. Agile projects do not start with complete upfront definitions; variability through development is expected. And, importantly, continuous feedback is built into the development process. This inherent adaptability lets teams realign product development as needs change, and it's often cheaper to build something fast and adapt to user feedback than it is to invest in trying to get everything right up front.

While Agile brings flexibility and speed to development, the practices that really accelerate delivery of scalable, reliable code are in the DevOps realm.

DevOps is all about merged teams and automation in Agile development. Agile development can be implemented in a traditional culture or in a DevOps culture. In DevOps, the devs do not throw code over the wall as in a traditional dev-QA-ops structure. With a DevOps arrangement, the team manages the entire process.

Can Waterfall, Agile and DevOps coexist? Should they?

DevOps practices can be combined in part with Waterfall development. For example, the development team can use tools to automate the build. The siloed, staged nature of Waterfall, however, means most DevOps practices are not applicable.

DevOps culture grew out of Agile and helps to speed time to market. If your team and project use Agile development, you should consider DevOps practices based on:

- the size of your project;

- the skill set of your team members;

- your organizational structure and your ability to modify that structure and culture as necessary;

- whether you operate in the cloud or host locally; and

- your IT budget.

DevOps requires a toolchain. While plenty of tools are open source, an organization that wants to establish a DevOps culture will face costs and challenges getting started.

Multiple teams can use some DevOps practices without employing a DevOps culture. Consider the following examples:

- A team developed siloed code bases for each client, based on a nonscalable architecture and via traditional dev/QA/ops manual deployments. However, the group used application performance monitoring to support operations and customer success.

- Another team used a Scrum framework for development, employed a microservices architecture, build automation (Maven) and CI (Jenkins/JUnit), and operated in the cloud (AWS) using containers (Docker), but did not implement configuration management, monitoring or orchestration. So, even though the build was automated, unit tested and merged into the proper branch, the code's QA testing was done manually. The code was then given to operations for deployment. Why would an organization do this? In this case, the organization chose to build an entirely new system and hire its staff at the same time. The engineering group, which was established first, had experience with some DevOps tools but lacked the expertise to establish the other elements of the DevOps culture. This scenario resulted in animosity between dev and ops; they were not one team with the same set of goals. When they progressed to implement configuration management and orchestration, they had a hard time agreeing on the tools to use.

In practice, even a little bit of automation in software development and operations is better than none. Automation helps achieve consistency, scalability, reliability and reusability, and it can reduce costs. More automation drives faster time to market and more reliable systems. The fastest and most stable systems will come from fully automated build pipelines, where code, not a person, decides if a build is good enough.
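As a rough illustration of code deciding whether a build is good enough, the sketch below models a simple automated quality gate. The `BuildReport` fields, the `build_is_good_enough` function and the 80% coverage threshold are hypothetical choices made for this example; a real pipeline would read these values from its own test and coverage tooling.

```python
from dataclasses import dataclass


@dataclass
class BuildReport:
    """Hypothetical summary a CI job might produce for one build."""
    tests_run: int
    tests_failed: int
    coverage: float  # fraction of code covered by tests, 0.0 to 1.0


def build_is_good_enough(report, min_coverage=0.80):
    """Return True only if the build passes every gate.

    The gates and the 0.80 threshold are assumptions for illustration.
    """
    if report.tests_run == 0:
        return False  # an untested build is never promoted
    if report.tests_failed > 0:
        return False  # any failing test blocks the release
    return report.coverage >= min_coverage


# Usage: the pipeline promotes or rejects the build without human sign-off.
report = BuildReport(tests_run=412, tests_failed=0, coverage=0.87)
if build_is_good_enough(report):
    print("promote build to next stage")
else:
    print("reject build")
```

Keeping the decision in one small function makes the promotion criteria explicit and versionable, so dev and ops can review and change them together.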

Remember, DevOps is a single team with the merged goals of speed and stability. While an organization will need to learn what DevOps truly is -- and isn't -- a proper implementation can lead to happier, more innovative teams and more satisfied customers.